A mixture of different tips and tweaks for various Citrix products. If I come across others that I feel are of use I will add them to this post. Also do use the comments section to add suggestions of your own and I will publish them to the post.

♣ .NET

♣ Anti-Virus exclusions and recommendations

♣ App Layering

♣ App-V

♣ Citrix Policies

♣ Citrix Receiver for Windows

♣ Cloud

♣ Director

♣ Domain Infrastructure

♣ Golden Image/Image Performance

♣ Graphics

♣ Group Policy

♣ Hardware/Hypervisor

♣ Hotfixes

♣ Licensing

♣ Logon Times

♣ Machine Creation Services

♣ Misc

♣ NetScaler

♣ Printing

♣ Provisioning Services

♣ PVS 7.x Server Anti-Virus exclusions

♣ PVS vDisk/Target Device 7.x Anti-Virus exclusions

♣ Skype for Business

♣ SQL

♣ StoreFront/Receiver for Web

♣ Updating PVS Target Device Software/VM Tools

♣ User Environment Management

♣ User Profiles

♣ VDI or RDSH

♣ Workspace Control

♣ XenApp and XenDesktop

.NET

- If you have upgraded .NET or installed .NET updates on a base image, I always recommend running NGEN to manually regenerate the Native Image Cache assemblies before pushing the image to production. The ngen.exe update command does this. Remember to run NGEN from both the 32-bit (Framework) and 64-bit (Framework64) directories.

- Use the ngen queue status command to check the progress of the NGEN task. Once it returns the message “The .NET Runtime Optimization service is stopped”, NGEN is complete.
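A minimal Python sketch of the two NGEN steps above, in case you script your image sealing. It assumes .NET 4.x lives in the v4.0.30319 folder under C:\Windows and that you only need the update action; the function names are hypothetical helpers, not part of any Citrix or Microsoft tooling.

```python
from pathlib import PureWindowsPath

# Assumption: .NET 4.x is installed in the v4.0.30319 folder; adjust for other versions.
NET4_VERSION_DIR = "v4.0.30319"
STOPPED_MESSAGE = "The .NET Runtime Optimization service is stopped"


def ngen_update_commands(windir: str = r"C:\Windows",
                         version_dir: str = NET4_VERSION_DIR) -> list[list[str]]:
    """Return one 'ngen.exe update' command for each Framework directory (32-bit, then 64-bit)."""
    commands = []
    for framework in ("Framework", "Framework64"):
        ngen = PureWindowsPath(windir, "Microsoft.NET", framework, version_dir, "ngen.exe")
        commands.append([str(ngen), "update"])
    return commands


def ngen_finished(queue_status_output: str) -> bool:
    """True once 'ngen queue status' output reports the optimisation service has stopped."""
    return STOPPED_MESSAGE.lower() in queue_status_output.lower()


if __name__ == "__main__":
    for cmd in ngen_update_commands():
        print(" ".join(cmd))
```

On the gold image itself you could pass each command list to subprocess.run(cmd, check=True), then run ngen queue status until ngen_finished() returns True before sealing the image.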

Anti-Virus exclusions and recommendations

- See https://www.citrix.com/blogs/2016/12/02/citrix-recommended-antivirus-exclusions/ for tips on excluding files and processes for StoreFront, Delivery Controllers, VDA etc.

- Consider host-level Anti-Virus, especially when you have many VDAs. Bitdefender is available for XenServer 7.x, and products such as Sophos vShield can be installed on ESX or Hyper-V. Offloading scanning to the host in this way can increase VM density per host.

App Layering

- Make optimisations in the Platform Layer, not the OS Layer. For portability, keep the OS Layer as generic as possible, i.e. little configuration beyond the base Operating System, patching and .NET Frameworks. This lets you use the same OS Layer across multiple hypervisors, instead of carrying optimisations such as disabled Hyper-V services in an OS Layer that you used with vSphere but now want to use with Hyper-V.

- Another reason to keep optimisations in the Platform Layer is to ensure they always apply. For example, if an administrator enables the Windows Update service or reverses an optimisation in an Application Layer, that change takes priority over an optimisation set in the OS Layer. Because the Platform Layer has the highest priority, an optimisation set there cannot be undone by mistakes like this.

- Any modifications made to HKCU in an Application or OS Layer are not captured.

- You can modify NTUSER.DAT in a layer. If you edit it in multiple layers, the highest priority layer wins. Priority runs from the Platform Layer, then the Application Layers from most recent creation date to oldest, and finally the OS Layer.

- You can use the Local Group Policy Editor in multiple layers, but the highest priority layer containing Local Group Policy changes overwrites those in any lower priority layer.

- When upgrading the ELM, you don’t have to upgrade the Machine OS Tools within the OS Layer. The next time you publish an image from the upgraded ELM Appliance, the newest Machine OS Tools are automatically injected into the image. If you want to upgrade the scripts, however, you will have to create a new OS Layer version and replace the scripts that come packaged with Machine OS Tools.

- App Layering can only merge special registry values, such as REG_MULTI_SZ, that Citrix has chosen to merge. You might find that installing a Single Sign-On provider or Citrix WEM in an Application Layer writes to a REG_MULTI_SZ value such as DependOnService, and the Platform Layer, which also writes to that value, overwrites what the Application Layer set. For an example where WEM loses its Netlogon service dependency, see the bottom of the Install WEM Agent section here: https://jgspiers.com/citrix-workspace-environment-manager/#Install-WEM-Agent

- Never reduce the Application Layer size below the default of 10GB. You can increase the size, and the Application Layer will be thin provisioned.

- Do not disable 8.3 Name Creation or NTFS Last Access Time Stamps.

- Install .NET into the OS Layer. Many applications have .NET as a prerequisite, and you don’t want to end up with multiple copies of .NET 4.6 installed across many Application Layers. Also, when patching the OS Layer you can include .NET updates at the same time.

- Likewise, install other shared Windows dependencies, such as Visual C++ Redistributable packages used by multiple applications, separately into their own Application Layer.

- When updating an OS Layer, you should not have to worry about turning automatic updates on or off or enabling the Windows Update service, because Windows Update should be disabled in the Platform Layer, which isn’t present during OS or Application Layer versioning. This is another reason optimisations belong in the Platform Layer: you save time when patching the OS Layer because you never have to touch the Windows Update service.

- Join the Platform Layer to the domain, but not any Application or OS Layer. If you need to join the Packaging Machine to the domain while updating an OS or Application Layer that is OK, but make sure you remove the machine from the domain before finalising the layer.

- Do you need to import registry keys to suppress EULAs, other prompts or updates? Before finalising a layer, review any customisations and best practices needed to give users the best experience once the layer is in production.

- After an application install, check whether the application has created Scheduled Tasks to update itself or collect usage statistics, or installed any new services that can be disabled. It is easy to compare the list of services before and after the install using PowerShell. If services have been installed that are not operationally required, disable them before finalising the image. Likewise, disable any unwanted Scheduled Tasks. You don’t want to end up with an image full of wasteful Scheduled Tasks.

- Has the application install created any new Active Setup entries, and if so, do you need them? Active Setup adds to logon times. Adobe Reader, for example, creates Active Setup keys.

- Before finalising any layer, run my App Layering Preparation script, which clears out temporary files and Event Logs, runs an NGEN recompile and more – https://jgspiers.com/citrix-app-layering-preparation-script/

- Install applications from a share or an ISO rather than downloading them to your desktop, to keep the layer size as small as possible. If you download a 3GB ISO only to delete it afterwards, you won’t reclaim that space. Download the media on a different machine beforehand.

- Local printers are installed in HKLM which can be captured in a layer. Network printers are installed in HKCU and not captured. You should use other methods such as Citrix WEM or Group Policy to assign network printers to users.

- Your main Hypervisor tools should go into the OS Layer. App Layering uses scrubbing techniques to remove any unused Hypervisor drivers during image deployment. If you have a secondary Hypervisor, you can install its tools into the Platform Layer for that Hypervisor type.

- You can only uninstall Windows 10 UWP applications in the OS Layer.

- To create users and groups, use the OS Layer. Users and groups created in Application or Platform Layers are not captured.

- If Elastic Layering is adding a long time to your logins, review the Elastic Layer log file which resides in %ProgramData%\Unidesk\Logs\ulayersvc.log

- When you enable Elastic Layering on an Image Template and then deploy the image, 20GB is added to the image size. This is why you’ll sometimes see PVS vDisks deployed by ELM with different sizes even though they contain the same layers.

- You cannot set registry permissions for domain users or groups in any layer other than the Platform Layer. As the Platform Layer is the only domain-joined layer, changing permissions on registry objects there should cause no problems.

- There is a hard limit of 1000 layers per Image Template.

- You can mount a maximum of 2558 Elastic layers.

- Sometimes an application has to go into the Platform Layer to work properly. This is common with Imprivata for single sign-on.

- Always run ngen.exe update from the versioned .NET subfolders within the Framework and Framework64 folders before finalising a layer. NGEN normally runs when a machine is idle, so if you don’t run it before finalising an image you may end up deploying a production image to 100 VMs that each run NGEN every time they boot. Refer to my App Layering Preparation script, which runs NGEN automatically for you.

- When creating a layer, everything you do is captured, including opening a web page to download a file, which leaves cookies and temporary internet files behind. Do as little as possible in a layer to get the job done, and download media before creating the layer.

- If applications such as Adobe Flash and Java, or drivers such as printing or scanning drivers, are going to be used by most or all desktops, it is OK to bundle them together in a single Application Layer. If you do bundle other applications together, plan carefully and consider the risks; for example, if you needed to re-create the layer you would have to reinstall potentially several applications rather than just one.

- If an Application Layer assigned elastically adds 10 seconds to logon times on an RDSH host, only the first user takes that hit. Subsequent users logging on who use the same elastic layer receive quicker logons, as the layer does not need to be mounted to the desktop again until the next reboot.

- Also, when multiple layers are attached to the same machine elastically, the time each adds to the logon can differ from when it is attached to a desktop on its own. This is because some Elastic Layering operations only need to be performed once, which benefits desktops with multiple elastic layers attached.

- Run SetKMSVersion.exe within the OS Layer to correctly configure the desktop for KMS licensing.
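To make the priority rule for NTUSER.DAT and Local Group Policy edits concrete, here is a small Python model of it. The Layer class and the helper functions are hypothetical and only encode the ordering described above (Platform Layer first, then Application Layers from newest to oldest creation date, then the OS Layer); they are not an App Layering API.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Layer:
    name: str
    kind: str       # "platform", "app" or "os" (hypothetical labels)
    created: date   # creation date; only used to order Application Layers


def priority_order(layers):
    """Highest priority first: Platform, then App Layers newest to oldest, then OS."""
    platform = [layer for layer in layers if layer.kind == "platform"]
    apps = sorted((layer for layer in layers if layer.kind == "app"),
                  key=lambda layer: layer.created, reverse=True)
    os_layers = [layer for layer in layers if layer.kind == "os"]
    return platform + apps + os_layers


def winning_layer(layers, edited_names):
    """Return the layer whose NTUSER.DAT or Local Group Policy edit takes effect."""
    for layer in priority_order(layers):
        if layer.name in edited_names:
            return layer
    return None


if __name__ == "__main__":
    image = [
        Layer("Win10 OS", "os", date(2017, 1, 1)),
        Layer("Office", "app", date(2017, 3, 1)),
        Layer("Chrome", "app", date(2017, 6, 1)),
        Layer("vSphere Platform", "platform", date(2017, 2, 1)),
    ]
    print([layer.name for layer in priority_order(image)])
    print(winning_layer(image, {"Office", "Win10 OS"}).name)
```

In this model, an NTUSER.DAT change made in both the Office layer and the OS Layer resolves to the Office layer, and any change made in the Platform Layer would beat both.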

App-V

- Enable SCS (Shared Content Store), particularly with non-persistent machines. When using SCS, applications are not cached on disk but streamed from App-V publishing servers. Given that the VDA and App-V servers normally reside within the same datacentre, streaming should work well over fast, low-latency links. Some application data, known as Feature Block 0 or the Publishing Feature Block, is still cached; it consists of application icons, scripts and any metadata required to run the application, so its size is small.

- As of XenApp and XenDesktop 7.8, App-V Streaming and Management servers are not needed to integrate App-V with Citrix Studio. Instead, you point Studio directly at the App-V package share. This is called Single Admin Mode.

- According to Tim Mangan, you should install or enable the App-V client on a gold image before installing the VDA software. This allows the VDA installer to detect that App-V is present and install some additional components.

- As of XenApp and XenDesktop 7.14, the CtxAppVCOMAdmin account is no longer created and used. Instead, this has been replaced by a system service called CtxAppVService. This is useful to know when using Citrix App Layering as it is no longer a requirement to manually create the user account in an OS Layer and reset the COM+ object password.

- It is recommended to pre-add packages to your gold image to reduce the processing and time it takes to publish packages to users. With Citrix App Layering, I like to run a global pre-add in an Application Layer.

Citrix Policies

- Go through each policy setting, review it, and disable anything you do not need. Settings such as audio redirection and client drive mapping are enabled by default but do not suit every environment. Also, don’t configure policies that do not apply to your VDA version. For example, I have come across sites with the 7.6 Server OS VDA installed where the administrator was trying to configure Session Limits through Citrix Policies. Check which VDA versions each policy applies to.

Citrix Receiver for Windows

- Citrix Receiver for Windows 4.8+ has the ability to auto-update. Whilst this is advantageous for many, it may be undesired in the enterprise. For more information see https://jgspiers.com/citrix-receiver-windows-auto-update/

Cloud

- Keep the Citrix Cloud status portal URL handy. It’ll show you which services are healthy and if any are not you’ll get service notifications with explanations of the issues. You can also subscribe to updates via email, SMS, Slack or Webhooks – http://status.cloud.com/

- Likewise the Azure status portal shows you service state, disruption information and history of events. You can create your own personalised dashboard – https://azure.microsoft.com/en-gb/status/

- Use Availability Sets in Azure to ensure machines are distributed across as many Update and Fault Domains on the underlying hardware as possible. This means that when planned maintenance occurs on the Azure platform, a portion of machines is kept online to avoid disruption to service.

- Install pairs of Citrix Cloud Connectors within your Resource Location. Citrix periodically updates the connectors automatically, but with a pair only one is ever taken offline at a time.

- Contact Citrix support if your service key has been compromised. Citrix do rotate keys periodically for all Citrix Cloud customers.

- If your forest has multiple domains, Citrix Cloud Connector will discover them.

- Smart Build is a quick and easy way to deploy Proof of Concepts of XenApp and XenDesktop. See https://jgspiers.com/citrix-smart-tools/#Smart-Build

Director

- Install Director on its own dedicated machine and see the tweaks at https://jgspiers.com/tweaking-citrix-director-dash-of-monitoring/

- Director logon duration times can be skewed if you have disclaimers on your Desktop machines: the longer a user takes to accept the disclaimer, the more time is added to their logon duration count. Avoid logon disclaimers if possible and instead move them to the Desktop wallpaper, StoreFront UI, NetScaler UI themes etc.

Domain Infrastructure

- Make sure Active Directory Sites and Services is properly configured so that, wherever possible, authentication takes place against nearby Domain Controllers rather than ones on the other side of the world. Also make sure you have enough Domain Controllers to handle authentication requests and Group Policy processing, especially at peak logon times when Domain Controllers receive the most requests.

- Avoid deep group nesting; keep your group membership strategy simple.

Golden Image/Image Performance

- Optimise your image. This could be the difference between fitting 100 VMs on a host versus 150, or between 50 second and 30 second logons. A lean image prepares sessions quicker, reducing logon times, and uses less CPU, RAM and IOPS because fewer processes, services and Scheduled Tasks are running, which increases VM density per host.

- Windows Server 2016 Optimisation Script – https://jgspiers.com/windows-server-2016-optimisation-script/

- Example of how optimisation drops logon times by 20 seconds – https://jgspiers.com/citrix-director-reduce-logon-times/#Optimised-Image

- Windows Server 2012 R2 Optimisation Script – https://jgspiers.com/windows-server-2012-r2-optimisation-script/

- Windows Server 2008 R2 Optimisation Guide (written for XenApp 6.5 but largely applicable to 7.x) – http://support.citrix.com/article/CTX131577/

- Windows 7 Optimisation Guide – https://support.citrix.com/article/CTX127050

- Unofficial Windows 10 Optimisation Guide – https://support.citrix.com/article/CTX216252

- How to hide machine drives (A, B, C, D etc.) from users in an ICA session. Note that Citrix Workspace Environment Management should be your go-to product for locking down user sessions – https://support.citrix.com/article/CTX220108

- Remove old device drivers (ghost devices) from your Gold Image periodically. You will be surprised at how many of these devices can build up – https://jgspiers.com/remove-unused-device-drivers-from-citrix-gold-image/

- Keep your image up to date at all times with patches. Not only does this protect your machines from vulnerabilities but you generally get better performance from patched Operating Systems.

- Before deploying an Operating System, make sure it is supported by Citrix. For example, Citrix do not support any non-Current Branch for Business (non-Semi-Annual Channel) Windows 10 versions.

- It is of paramount importance that you perform hardening exercises on your gold image before deploying to production. If your systems were hacked, you want to make it as hard as possible for the attacker to break out of your XenApp/XenDesktop machines and reach other systems. Citrix has published hardening guidance in the white paper System Hardening Guidance for XenApp and XenDesktop.

- It is recommended to uninstall and then reinstall the VDA software on your gold image after installing or upgrading hypervisor tools. This is because hypervisor tools install their own graphics drivers etc., which can clash with those of the VDA.

- Users spend more time working within a web browser than ever before, due to the rise in digital content and applications now delivered through the web. Because the browser is such a popular application in the workplace, it is a big consumer of CPU, which affects host scalability and density. Investing in GPU is a solid way to reduce CPU consumption by offloading graphics to virtual GPUs, and it also provides a better user experience. Another way to cut the CPU consumption of web browsers is to include ad-blocking software in the gold image. Because the web reaches so many people, companies spend billions of dollars placing advertisements on websites such as YouTube and Facebook, and rendering those advertisements costs CPU. For more information on how ad-blocking reduces CPU see https://jgspiers.com/how-internet-explorer-is-impacting-your-citrix-environment/

Graphics

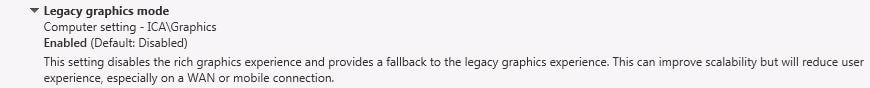

- Running a legacy OS (Windows 7/2008 R2 or earlier) and getting poor performance? Enable Legacy Graphics Mode. It gives a noticeable performance boost and is designed for these older operating systems.

- Use GPUs or enable ad-blockers to prevent your CPU being hit by advertisements and rich multimedia content. See https://jgspiers.com/how-internet-explorer-is-impacting-your-citrix-environment/ for examples of how CPU can be affected and how GPU/ad-blockers help.

- Read the following post for a rundown of Citrix graphics modes: https://jgspiers.com/citrix-thinwire-hdx-graphics-modes-what-is-right-for-you/

- Plan to include GPU in your deployments. Whilst GPU was historically needed mainly for drawing and imaging software, everyday applications such as Chrome, IE and Office now all make use of GPU. Web applications using HTML5 are more common than ever and benefit from GPU to process HTML5 content. Windows 10 has higher graphics requirements than any previous desktop Operating System. Without GPU you may end up with reduced VM density per host and a poorer user experience. With GPU, CPU consumption drops, allowing you to host more VMs per server and give your users a great experience.

- NVIDIA recently reported that graphics accelerated applications have doubled since 2011.

- Systrack recently analysed data and found that Firefox, Excel, Office, Chrome and other applications running on Windows 10 all consume more GPU than they did when running on Windows 7. See Elevating User Experience through GPU Acceleration.

- H.264 provides users with a great graphical experience but consumes more CPU on both the VDA and the end-user device during encoding and decoding.

- XenApp/XenDesktop 7.11 introduced selective H.264, which uses H.264 to compress actively changing regions of the screen and Thinwire+ for the remaining content, such as static pictures and text. This setting provides the best balance between density per host (normally a 10% gain over full screen H.264) and user experience for multimedia content. Implement this option, evaluate the performance, user experience and resource consumption, and then tweak based on the results to find the best fit for your environment.

- XenApp/XenDesktop 7.11 introduced H.264 hardware encoding which offloads H.264 compression on to the GPU for machines using supported NVIDIA GPU with HDX 3D Pro. Using this feature reduces CPU consumption which in turn increases host scalability.

- Make use of the built-in templates that come with XenApp/XenDesktop 7.6 FP3. There are templates for Optimized WAN, High Server Scalability and Very High Definition User Experience. These templates are a great starting point for configuring graphics policy settings to meet your use case.

- Regularly check for and install updated graphics drivers. New drivers contain bug fixes, security fixes and performance enhancements.

- Citrix recommend that HDX 3D Pro machines have 4vCPU and 4GB RAM minimum.

- Consider capping the frame rate VDAs use at a lower number. The lower the frame rate, the better the density per host, but at the expense of user experience.


Group Policy

- Reduce the number of GPOs applied to Citrix machines as much as possible. One way to do this is to merge as many settings as possible into one Group Policy object rather than spreading them across separate policies. Windows takes much less time to apply one GPO than five separate ones that could easily have been merged.

- Disable unused GPO sections. You may find that your Group Policy objects have only computer or user settings defined. Disabling the unused computer or user section can speed up the time to apply these policies. This is achieved via GPMC.

- If you use logon scripts (though having none should be the goal), assign them to users via GPOs rather than through the logon script setting on their AD accounts.

- Note: If you do use logon scripts, do you know how long they take to run? If not, you should check. Personally, I think we should all be moving away from logon scripts where possible. You could also test running logon scripts after logon has finished by configuring the Group Policy setting Computer Configuration -> Administrative Templates -> System -> Group Policy -> Configure Logon Script Delay, which sets how long the Group Policy client waits after logon before running the scripts.

- Logon Script processing times can easily be reviewed from Citrix Director.

- Make sure the Group Policies applied to Citrix desktops are designed for them. Many Citrix environments receive Group Policy settings intended for physical desktops, which can hurt the performance of virtual desktops. Virtual desktops deployed via Citrix technologies should be managed in isolation, with Organizational Units and Group Policy objects separating them from other machines.

Hardware/Hypervisor

- Size your hardware to cope with peak load. Every environment has a time of day (morning, lunch) or day of the week when resource consumption is at its highest, so size for this. This ensures an acceptable user experience during peak load and an even better one the rest of the time.

- Take particular care when sizing CPU. CPU is usually the first resource to max out.

- Assign more than 2vCPUs per XenApp VM. In the past, many people would have recommended VMs with no more than 2vCPUs. If you needed more, you scaled out. Now the opposite is suggested in that if you assign 4vCPUs or even 6vCPUs to XenApp servers, you can get better performance and of course more user density. Do keep in mind NUMA which is explained further down this post.

- Keep Hypervisors dedicated to RDSH or VDI VMs. Do not mix SQL, Domain Controllers and DHCP servers with VDI, for example.

- Never size for average IOPS or network bandwidth. Always size with headroom, as there may come a time when it is needed.

- CPU overcommitment can cause performance issues. I’ve found from testing that a 1.5x to 2x overcommitment ratio offers the best performance results. For example, on a host with 20 physical cores, 1.5x overcommitment would be 30 vCPUs and 2x would be 40 vCPUs.

- On the note of hyperthreading, don’t disable it. Hyperthreading allows each physical core to behave like two logical processors, as two independent threads can run at the same time. This increases performance by making better use of idle resources.

- Determine how many NUMA nodes your hosts have. You can use esxtop on vSphere or xl info -l on XenServer. All Hypervisors these days are NUMA aware. A host with 16 cores and two NUMA nodes has 8 cores per NUMA node. In this scenario, you shouldn’t assign more than 8 vCPUs to any virtual machine. If you assign more, the virtual machine will start to use resources from the second NUMA node, causing latency and performance degradation because this is classed as “remote” memory access.

- Use and enable Cluster on Die when using Intel chips that support it. Some chips come with uneven NUMA nodes, for example a box with 14 physical cores and two NUMA nodes consisting of 8 and 6 cores. When enabled, Cluster on Die can take a core from the 8 core NUMA node and present it to the 6 core NUMA node, so each node becomes even.

- Virtual machines require more memory than is allocated to them, for devices such as SVGA frame buffers and other attached devices that the hypervisor has to map through. The amount of extra memory required per virtual machine depends on the number of vCPUs, the configured memory, connected devices and operating system architecture. Overcommitting memory to virtual machines can waste memory and increase overhead, reducing the memory available to other virtual machines. Some hypervisor technologies, such as memory compression in vSphere, help with overcommitment, but in general you do not want to overcommit.

- Use Transparent Page Sharing, a vSphere feature that allows virtual machines with identical memory content to share those pages. This increases the memory available on ESX hosts.

- NIC teaming should always be used at the hypervisor level for redundancy. NICs should be uplinked to separate physical switches, which provides redundancy at the switch level. In some cases NIC teaming can also provide more throughput.

- Use PortFast on ESX host uplinks. Failover events can cause spanning tree protocol (STP) recalculations which can cause temporary network disconnects. PortFast immediately sets the port back to a forwarding state and prevents link state changes on ESX hosts from affecting the STP topology.

- Remove unneeded virtual machine devices such as CD-ROMs and Floppy Drives from virtual machines. This will reduce the amount of resources the hypervisor uses to map such devices through to a virtual machine.

- Use VMXNET3 NICs with vSphere as you get better performance and reduced host processing when compared with an E1000 NIC. Citrix Provisioning Services does not support running virtual machines on an E1000 NIC on ESX 5.x+.

- On vSphere, use esxtop to view NUMA node, local/remote memory access and other statistics to ensure there are no performance issues.

- Keep your hypervisor host firmware and BIOS versions up to date, as new versions contain bug fixes, security fixes and performance enhancements.

- The rule of 5 and 10 is a scalability-focused article published by Nick Rintalan that describes how to calculate how many VDI or XenApp sessions you can fit on a server. Take the total number of physical cores in a box and multiply it by 5 for VDI or 10 for XenApp to get the total number of users a single box can support. For more details see Citrix Scalability – The Rule of 5 and 10. Keep in mind that many factors will affect these numbers.

- For example, a 16 core box hosting XenApp: 16 * 10 = 160 user sessions per host.

- Don’t forget to account for the failure of a hypervisor host in your sizing and disaster recovery plans. For example, a 4 node cluster supporting 400 user sessions must be able to withstand a host failure without compromising performance and user experience. For this reason it is advisable to have additional hosts.

- Setting Hypervisor host power management to Performance/High Performance is recommended so that VMs run at the highest clock speeds and you get the most density out of your physical hosts. Servers are normally configured out of the box to be energy efficient. To manage the power settings, CIMC on Cisco UCS servers, for example, can be used to set Energy Performance and Power Technology to Performance, which Cisco recommends when running virtualised workloads.

- Power Management is also configured at the Hypervisor level, for example in vSphere. Setting the Power Policy to High Performance is recommended for XenApp and XenDesktop workloads. This policy prevents use of all P-States and C-States beyond C1 and disables Turbo Boost.

- Disable P-States completely and disable C-States beyond C1. This is exactly what the High Performance setting on ESX does. P-States set conditions such as voltage and clock frequency on cores so that they save power whilst still performing their duties. C-States (C1, C1E, C2 etc.) are applied to idle cores, with each state further down the list entering a deeper power conservation mode. For example, state C6 effectively powers off the core. Think of it as a dimmer switch: the more you dim the light, the more power you save. Not all processors support the same C-States. State C0 is when the processor is fully operational. The higher the state number, the lower the power consumption but the longer the core takes to wake up.

- If you want to know how many C-States are enabled on XenServer, run command xenpm get-cpuidle-state | grep total | uniq.

- Before choosing a Thin Client for your Citrix estate, make sure it supports H.264, Skype for Business, HDX 3D Pro and any other feature you are interested in using. Devices that are certified by Citrix contain the Citrix HDX Ready logo. Check the Citrix Ready Marketplace for guidance.

- Other peripherals such as Webcams, Headsets and Microphones should also be checked for support with Citrix XenApp and XenDesktop.
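The sizing rules of thumb in this section (CPU overcommit ratio, NUMA node size, the rule of 5 and 10 and spare capacity for host failure) reduce to simple arithmetic. The Python sketch below is a hypothetical calculator, not a Citrix tool; it assumes evenly sized NUMA nodes and one spare host (N+1) by default, so treat its output as a starting point for load testing rather than a final answer.

```python
import math

# Rule of 5 and 10 multipliers (users per physical core).
MULTIPLIERS = {"vdi": 5, "xenapp": 10}


def rule_of_5_and_10(physical_cores: int, workload: str) -> int:
    """Estimated users per host: cores x 5 for VDI, cores x 10 for XenApp."""
    key = workload.lower()
    if key not in MULTIPLIERS:
        raise ValueError(f"workload must be one of {sorted(MULTIPLIERS)}")
    return physical_cores * MULTIPLIERS[key]


def vcpu_budget(physical_cores: int, ratio: float) -> int:
    """Total vCPUs you can allocate at a given overcommit ratio (e.g. 1.5 or 2.0)."""
    return int(physical_cores * ratio)


def max_vcpus_per_vm(physical_cores: int, numa_nodes: int) -> int:
    """Largest VM that still fits in one NUMA node (assumes evenly sized nodes)."""
    return physical_cores // numa_nodes


def hosts_needed(total_users: int, users_per_host: int, spare_hosts: int = 1) -> int:
    """Hosts required to carry the peak load, plus spare hosts to absorb a failure."""
    return math.ceil(total_users / users_per_host) + spare_hosts


if __name__ == "__main__":
    print("XenApp users on 16 cores:", rule_of_5_and_10(16, "XenApp"))
    print("vCPUs on 20 cores at 1.5x / 2x:", vcpu_budget(20, 1.5), vcpu_budget(20, 2.0))
    print("Max vCPUs per VM, 16 cores / 2 NUMA nodes:", max_vcpus_per_vm(16, 2))
    print("Hosts for 400 users at 100 per host, N+1:", hosts_needed(400, 100))
```

With the figures used in this section, a 16 core XenApp host gives 160 sessions, a 20 core host allows 30 to 40 vCPUs, and 400 users at 100 per host needs 4 hosts plus 1 spare.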

Hotfixes

- If you find something is broken in your environment, always check the Microsoft and Citrix download pages for released hotfixes and patches. You can also run your environment through Citrix Insight Services (CIS).

- Recommended hotfixes for XenServer 6.x – https://support.citrix.com/article/CTX138115

- Recommended hotfixes for XenServer 7.x – https://support.citrix.com/article/CTX225835

- Recommended hotfixes for XenApp/XenDesktop 7.x – https://support.citrix.com/article/CTX142357

Licensing

- Install the license server component on a dedicated machine if possible. License servers cannot share licenses; however, a single license server is enough to handle sites with thousands of users and servers (it can support 10,000 requests at once). If your license server fails, your site goes into a 30-day grace period. This means it is not absolutely necessary to make the license server highly available, because you have 30 days to recover the failed server. The license server processing and receiving threads can be tuned to increase performance, see http://docs.citrix.com/en-us/licensing/11-13-1/lic-lmadmin-overview/lic-lmadmin-threads.html.

- As of XenApp and XenDesktop 7.14, a single site can use different license products, for example XenDesktop Enterprise User/Device and XenApp Enterprise Concurrent. You cannot, however, mix editions, for example XenApp Platinum and XenDesktop Enterprise.

Logon Times

- For tips on decreasing logon times and the Interactive Session time shown in Citrix Director, read https://jgspiers.com/citrix-director-reduce-logon-times/

- Citrix Workspace Environment Management can help reduce logon times by deferring policy processing until after the user has logged on and the shell has loaded. See https://jgspiers.com/citrix-workspace-environment-manager/

Machine Creation Services

- Like PVS RAM cache, MCS in XenApp and XenDesktop 7.9+ can cache writes to RAM, reducing the number of write IOPS that hit your disk. This brings MCS in line with PVS in offering a write cache in RAM. See https://jgspiers.com/machine-creation-services-storage-ram-disk-cache/

- Machine Creation Services uses hypervisor management controllers such as SCVMM, vCenter and XenServer when deploying and upgrading/rolling back virtual machines. For this reason the hypervisor management controllers need to be kept highly available. Keep in mind that when using XenServer, MCS talks to the pool master, which must be highly available.

- Machine Creation Services can be used to deploy machines to Azure, unlike Provisioning Services.

- Machine Creation Services is a virtual only solution and does not deploy images to physical PCs like Provisioning Services can.

Misc

- Document every single setting and configuration change you make to your Citrix environment, including registry edits, Group Policy settings and application installs.

- User perception and adoption is critical to a successful deployment. Users expect an experience with virtual desktops similar to what they find on their home PCs and mobile devices.

- Whilst security is important, you do not want to be overly restrictive and deny access to as much as possible. This only damages the user experience and reduces productivity.

- Logon times to desktops are very important.

- Current Branch for Business (CBB) is supported by Citrix and recommended by Microsoft for enterprise use. Updates reach Current Branch (typically home user) PCs approximately four months before they reach Current Branch for Business, which allows Microsoft to reduce the risk of updates breaking CBB machines. Microsoft plan to support 2 CBBs each year.

- Citrix do not recommend applying feature updates directly over an installed VDA. Instead, uninstall the VDA, apply the feature update and then run a Windows patch cycle. Once that is complete, reinstall the VDA. Also review the image for newly installed services, Scheduled Tasks and UWP applications that should be disabled.

- Be aware that Microsoft OS feature releases such as Anniversary Update and Creators Update can result in new profile versions being introduced. Feature releases also normally come with additional UWP applications, services and Scheduled Tasks. You should review the differences in each version and make sure you keep images optimised.

NetScaler

- Use the TCP profile nstcp_default_XA_XD_profile with your NetScaler Gateway Virtual Servers for XenApp and XenDesktop traffic. This profile is designed for best performance of ICA traffic. It includes features such as:

- Nagle’s algorithm – Reduces the number of packets sent over the network by combining small outgoing messages and sending them at once. This suits ICA, which is by nature a chatty protocol.

- Forward Acknowledgement – Works alongside TCP SACK and improves congestion control.

- Note: Make sure firewalls that sit in between the NetScalers are not tearing down TCP sessions and recreating them without the TCP settings originally set by the TCP profile. Optimisations such as Selective Acknowledgement and Window Scaling are signalled through options in the TCP header, which is how the end-client learns they are supported and can be used.

- For every assigned vCPU on your VPX appliance, assign 4GB RAM.
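To see why Nagle’s algorithm, listed above as part of nstcp_default_XA_XD_profile, helps a chatty protocol such as ICA, the toy model below follows the rule described in RFC 896: while sent data is still unacknowledged, small writes are buffered and sent together once the ACK arrives (or once a full-sized segment has accumulated). It illustrates the algorithm only, not NetScaler’s TCP stack; the single-ACK and no-window simplifications are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class NagleSender:
    """Toy model of Nagle's algorithm (RFC 896) on one TCP connection.

    Simplifications (assumptions): one ACK acknowledges everything in flight,
    and there is no congestion or receive window.
    """

    mss: int = 1460  # maximum segment size in bytes
    unacked: bool = False  # is any sent data still unacknowledged?
    buffered: int = 0  # bytes written by the application but not yet sent
    sent: list[int] = field(default_factory=list)  # sizes of segments sent

    def _flush(self) -> None:
        # Full-sized segments may always be sent.
        while self.buffered >= self.mss:
            self.sent.append(self.mss)
            self.buffered -= self.mss
            self.unacked = True
        # A small segment is only sent when nothing is in flight.
        if self.buffered and not self.unacked:
            self.sent.append(self.buffered)
            self.buffered = 0
            self.unacked = True

    def write(self, nbytes: int) -> None:
        self.buffered += nbytes
        self._flush()

    def ack(self) -> None:
        self.unacked = False
        self._flush()


sender = NagleSender()
for _ in range(10):
    sender.write(10)  # e.g. ten keystrokes before the first ACK returns
sender.ack()
print(sender.sent)  # [10, 90]
```

Ten small writes become two segments on the wire: the first 10 bytes go immediately, and the remaining 90 bytes travel together once the ACK arrives.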

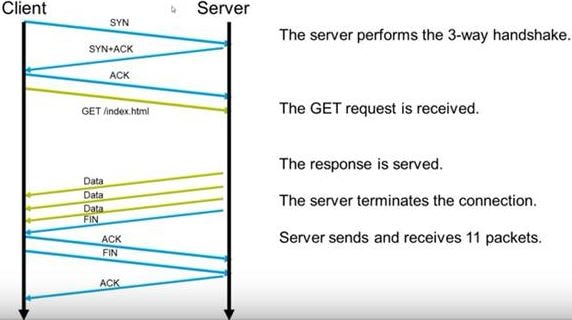

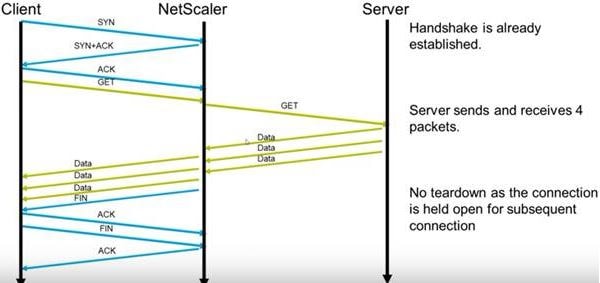

- NetScaler uses TCP multiplexing for the HTTP/S, TCP and Datastream (SQL) protocols. NetScaler keeps connections between itself and the back-end web servers open so that the web servers do not spend their time opening and tearing down connections. Multiplexing greatly reduces resource consumption on back-end web servers. The pictures show a typical TCP transaction without NetScaler and then a TCP transaction with NetScaler and how it is optimised. Without NetScaler, 11 packets are sent and received but only 3 of them carry data. This is a lot of overhead.

With NetScaler in the middle, the back-end web server only receives and sends 4 packets in total, whilst NetScaler does all of the work with the client.

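The saving from multiplexing comes from reusing back-end connections instead of opening a new one for every client request. The toy model below counts back-end TCP connection setups for a series of sequential client requests, with and without reuse. It is a conceptual sketch rather than how NetScaler implements multiplexing internally; the server name `web01` and the rule that a connection carries one request at a time are assumptions for illustration.

```python
from collections import defaultdict


class BackendPool:
    """Toy model of back-end connection reuse (multiplexing).

    Assumption for illustration: a connection carries one request at a time
    and, when reuse is on, is returned to an idle pool instead of being closed.
    """

    def __init__(self) -> None:
        self.idle: dict[str, int] = defaultdict(int)  # idle connections per server
        self.opened = 0  # back-end TCP handshakes performed

    def acquire(self, server: str) -> None:
        if self.idle[server] > 0:
            self.idle[server] -= 1  # reuse an already open connection
        else:
            self.opened += 1  # nothing idle, so open a new connection

    def release(self, server: str) -> None:
        self.idle[server] += 1  # keep the connection open for the next request


def handshakes(requests: int, reuse: bool) -> int:
    """Count back-end connection setups for sequential client requests."""
    pool = BackendPool()
    for _ in range(requests):
        pool.acquire("web01")  # hypothetical back-end server name
        if reuse:
            pool.release("web01")
        # without reuse the connection is torn down and never returned
    return pool.opened


print(handshakes(100, reuse=False))  # 100
print(handshakes(100, reuse=True))  # 1
```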

- HTTP/2 is not enabled by default on NetScaler. To enable it, edit your HTTP Profile and attach the profile to your desired Virtual Server or globally. TLS 1.2 must be enabled on the Virtual Server.

- If using NetScaler VPX 12.x with vSphere, make sure to use VMXnet3 NICs.

- When using NetScaler clustering use the N+1 method. A single node failure should not result in negative performance for your users. You should size your cluster to ensure that all remaining nodes can comfortably handle the extra traffic until the failed node is back online.

- Be aware that the default TCP profile nstcp_default_profile is enabled on NetScaler. This profile does not enable features such as Window Scaling, Nagle’s algorithm or Selective Acknowledgement, which all modern clients support. For this reason, review and apply customised TCP profiles to your Virtual Servers to ensure connecting clients receive the best performance and back-end web server processing is reduced.

- NetScaler 12 has a new option to configure an extra Management CPU. This is only currently available for certain MPX models. Issuing an extra CPU for management will boost configuration and monitoring performance.

- As both a security practice and to avoid issues with appliances configured for High Availability, disable any unused interfaces.

- When Load Balancing StoreFront, use Source IP persistency. When Load Balancing Web Interface, use Cookie Insert.

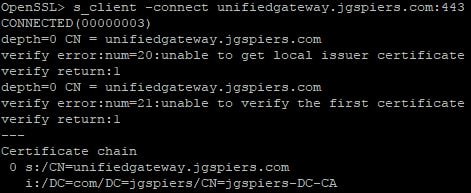

- The OpenSSL command openssl s_client -connect fqdn:port is useful for debugging issues with SSL servers running on NetScaler. Any certificate problems are reported in its output. In this example, OpenSSL cannot verify the server certificate because, on NetScaler, it was not linked to the CA root certificate that issued it.

- You can get SSL performance improvements simply by upgrading NetScaler appliances to a 12.x build. In Citrix testing, a VPX 1000 running 12.0 firmware processed 2,700 SSL ECDHE transactions per second, versus only 550 transactions per second on 11.1.
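The openssl s_client check from the tip above can be approximated with Python’s standard `ssl` module, which is handy when you want to sweep several NetScaler virtual servers in one go. This sketch uses the default verification context (system CA store plus host name checking), which is an assumption about what you want to test; the FQDN in the usage comment is a placeholder.

```python
import socket
import ssl


def check_tls(host: str, port: int = 443, timeout: float = 5.0) -> tuple[str, str]:
    """Rough counterpart to `openssl s_client -connect host:port`.

    Returns a (status, detail) pair instead of raising, so it can be run
    over a list of virtual servers.
    """
    # Verifies the chain against the system CA store and checks the host name.
    context = ssl.create_default_context()
    try:
        with socket.create_connection((host, port), timeout=timeout) as sock:
            with context.wrap_socket(sock, server_hostname=host) as tls:
                cert = tls.getpeercert()
                subject = dict(rdn[0] for rdn in cert.get("subject", ()))
                return "ok", f"{tls.version()} CN={subject.get('commonName', '?')}"
    except ssl.SSLCertVerificationError as exc:
        # For example, the server does not send a chain up to a trusted root.
        return "verify_failed", str(exc.verify_message)
    except ssl.SSLError as exc:
        return "tls_error", str(exc)
    except OSError as exc:  # refused, unreachable, DNS failure, timeout
        return "connection_error", str(exc)


# Usage (placeholder FQDN):
# print(check_tls("gateway.example.com", 443))
```

A `verify_failed` result corresponds to the verification error s_client reports for an unlinked certificate chain.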

Printing

- Ensure you optimise the printing experience for your users. See https://jgspiers.com/citrix-printing-universal-print-server/ for more information.

Provisioning Services

- See the list of PVS best practices published by Citrix.

- Don’t get too caught up on whether you should separate streaming and management traffic. It isn’t much of a requirement anymore, especially with today’s faster networks. I never suggest doing this.

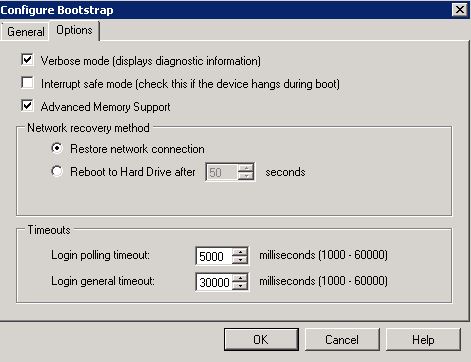

- Interrupt Safe Mode – Unless you have a specific reason, disable this. I have come across (vSphere) environments that had this option enabled but made no use of it, and I have seen 4-minute boot times reduced to 1 minute just by unticking this feature. Within the PVS Console, right-click each PVS Server -> Configure Bootstrap -> Options -> untick Interrupt safe mode (check this if the device hangs during boot).

- NetQueue – A feature of vSphere introduced in ESX 3.5. If you are experiencing slow boots even with Interrupt Safe Mode switched off, you may want to test disabling this feature (if it is enabled). NetQueue monitors the network load of all VMs and assigns queues to VMs deemed critical. To disable it via the CLI, run esxcli system settings kernel set -s netNetqueueEnabled -v FALSE. This is probably less of an issue now that NICs run at speeds of 1Gbps and above.

- Bootstrap – If you have multiple PVS servers in a load balanced setup, make sure each PVS server’s bootstrap contains its own IP address at the top of the bootstrap list. It makes sense that if a Worker VM gets its bootstrap from one PVS server, it should attempt to boot from the same one.

- E1000 NICs (vSphere) are not supported from ESX 5.x onwards.
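The bootstrap tip above amounts to a simple ordering rule: each PVS server lists itself first and the rest of the farm after it as fallbacks. A minimal sketch with hypothetical IP addresses, useful for planning the list you then enter in Configure Bootstrap on each server:

```python
def bootstrap_order(own_ip: str, farm_ips: list[str]) -> list[str]:
    """Bootstrap list for one PVS server: its own IP first, then the others.

    The other servers keep their original relative order as fallbacks.
    """
    if own_ip not in farm_ips:
        raise ValueError(f"{own_ip} is not one of the farm's PVS servers")
    return [own_ip] + [ip for ip in farm_ips if ip != own_ip]


FARM = ["10.0.0.11", "10.0.0.12", "10.0.0.13"]  # hypothetical PVS server IPs

for server in FARM:
    print(server, "->", bootstrap_order(server, FARM))
```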

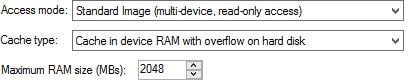

- Use Write Cache method Cache in device RAM with overflow on hard disk. Directing writes to RAM reduces the amount of IOPS underlying storage will face allowing you to fit more users on to that storage, or negate the need to load up on as many IOPS. It also provides your users with better performance.

- Citrix performed a basic test opening documents and performing general everyday operations on a VM with writes directed to RAM vs writes going to disk. The results were:

| Write cache target | Peak write IOPS | Average write IOPS | Peak read IOPS | Average read IOPS |
|---|---|---|---|---|
| Writes to RAM | 7.9 | 0.4 | 0 | 0 |
| Writes to disk | 376 | 34 | 243 | 4.5 |
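Expressed as ratios, the Citrix figures above show how much storage I/O the RAM cache removes. The snippet only divides the quoted numbers; it adds no new data.

```python
# Figures from the Citrix test quoted above: (writes to RAM, writes to disk).
RESULTS = {
    "peak write IOPS": (7.9, 376),
    "average write IOPS": (0.4, 34),
    "peak read IOPS": (0, 243),
    "average read IOPS": (0, 4.5),
}


def reduction(ram: float, disk: float) -> str:
    """Describe how the RAM-cache figure compares with the disk figure."""
    if ram == 0:
        return "eliminated"  # the RAM-cache run recorded no I/O for this metric
    return f"{disk / ram:.0f}x lower"


for metric, (ram, disk) in RESULTS.items():
    print(f"{metric}: {reduction(ram, disk)}")
```

That works out at roughly 48x fewer peak write IOPS and 85x fewer average write IOPS, with read IOPS eliminated entirely in this test.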

- To reduce the risk of writes ever reaching disk, size the RAM cache appropriately and use the below values as guidelines:

- For Desktop OS, assign 256MB-512MB of RAM to cache.

- For Server OS, assign 2048MB-4096MB RAM to cache.

- Enable Offline database support.

- As of PVS 7.7, the PVS BNI stack is multi-threaded, which allows for faster booting. Simply assign 2 vCPUs to your Target Devices to make use of this optimisation. See this post by Nick Rintalan – Turbo Charging Performance with PVS 7.7

- VHDX, UEFI and ReFS can all have positive effects on boot performance and vDisk creation/merge times. See https://jgspiers.com/speeding-up-citrix-pvs-merge-boot-times-with-vhdx-uefi-refs/

- For more tips on improving boot performance, see this post from Trentent Tye – Lets Make PVS Target Device Booting Great Again (Part 1)

- BDM (Boot Device Manager) has a single-stage boot process, unlike PXE/TFTP, because it does not need to download the PVS drivers; they are already in the image. TFTP boot via NetScaler, however, is a trusted method and one I personally prefer.

- Target Devices will fall back to Cache on server if the primary cache method is not possible, for example if your primary method is Cache in device RAM with overflow on hard disk but the hard disk is not present or is unconfigured. The XenDesktop Setup Wizard automatically creates and formats Write Cache disks for you, whereas the Streamed VM Setup Wizard does not.

- With XenServer 7.x+ and PVS 7.13+ you can use a new feature called PVS Accelerator. This feature reduces Target Device boot times, PVS server CPU usage and network traffic. You assign either RAM or disk space on XenServer to act as the caching location for PVS images. Instead of reaching out to the PVS server for a stream, Target Devices use what is in the XenServer cache.

- For large scale deployments of PVS, Citrix recommend “pod designs”. At Citrix Synergy 2017 Jim Moyle spoke in a presentation about how he deployed an 80,000 user PVS farm with 3,000 users per pod.

- You should consider deploying a PVS server strictly for management, for example one or two PVS servers used for general farm management to create, merge and publish vDisks. Since these operations are generally CPU intensive, you do not want them to run on PVS servers that stream to production VMs, as this can result in sluggish performance.

- For RAM sizing, capture the amount of RAM Target Devices consume over a period of, say, 1 week. RAMMap is a good tool to see how much Active and Cached memory each vDisk is consuming. Take the average cached value for each vDisk and add it to the Committed RAM value. Committed RAM can be easily viewed within Task Manager.

So for example, I have 2.5GB of Committed Memory and 2 vDisks that each consume 1GB of RAM in the Standby Cache, giving 4.5GB. Add an additional 512MB to that value and then a 15% buffer: (4.5GB + 0.5GB) × 1.15 = 5.75GB, which rounds up to around 6GB. Don’t forget that your Target Device VMs also cache objects in RAM, so it is important to assign enough RAM to your Target Devices. If a Target Device has to read from disk rather than from RAM, that is a read from the vDisk file, which means a read from the PVS server. If you find that vDisks are consuming a lot of RAM in the PVS server Standby Cache, review the offending Target Devices and monitor their RAM consumption.
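The RAM sizing steps in the example can be written as a small function, which makes it easy to rerun with your own RAMMap and Task Manager figures. The 512MB extra and 15% buffer are taken from the example and exposed as parameters; treat them as starting points rather than fixed rules. For the example’s inputs, (2.5 + 1 + 1 + 0.5) × 1.15 gives 5.75GB.

```python
def pvs_server_ram_gb(committed_gb: float, vdisk_cache_gb: list[float],
                      extra_gb: float = 0.5, buffer: float = 0.15) -> float:
    """Estimate PVS server RAM following the worked example.

    committed_gb   -- Committed memory observed in Task Manager
    vdisk_cache_gb -- average Standby Cache per vDisk (e.g. from RAMMap)
    extra_gb       -- fixed headroom added on top (512MB in the example)
    buffer         -- proportional buffer applied last (15% in the example)
    """
    base = committed_gb + sum(vdisk_cache_gb) + extra_gb
    return base * (1 + buffer)


estimate = pvs_server_ram_gb(2.5, [1.0, 1.0])
print(f"{estimate:.2f} GB")  # 5.75 GB
```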

- Make sure that the Anti-Virus installed on PVS servers is not configured to scan vDisk files on-access. This is bad for performance. vDisk files at the PVS server level should be excluded from on-access scanning completely.

- XenApp Advanced customers are not entitled to use PVS. XenApp Enterprise customers can use PVS, but only to host published applications running on Desktop OS; it cannot be used to deploy desktops or published applications running on Server OS.

- XenDesktop VDI edition can use PVS only to deploy virtual desktops, not physical.

PVS 7.x Server Anti-Virus exclusions

Consider excluding the below files from anti-virus scans and/or on-access scanning and add these processes to allowed processes/whitelists.

- Pagefile.

- VHD, VHDX, AVHD and AVHDX files within PVS stores

- C:\Windows\System32\drivers\CvhdBusP6.sys (Server 2008 R2)

- C:\Windows\System32\drivers\CvhdMp.sys (Server 2012 R2)

- C:\Windows\System32\drivers\CfsDep2.sys

- C:\Program Files (x86)\Common Files\Citrix\System32\CdfSvc.exe

- C:\Program Files\Citrix\Provisioning Services\StreamProcess.exe

- C:\Program Files\Citrix\Provisioning Services\StreamService.exe

- C:\Program Files\Citrix\Provisioning Services\SoapServer.exe

- C:\Program Files\Citrix\Provisioning Services\Inventory.exe

- C:\Program Files\Citrix\Provisioning Services\MgmtDaemon.exe

- C:\Program Files\Citrix\Provisioning Services\Notifier.exe

- C:\Program Files\Citrix\Provisioning Services\BNTFTP.exe

- C:\Program Files\Citrix\Provisioning Services\PVSTSB.exe

- C:\Program Files\Citrix\Provisioning Services\BNPXE.exe

- C:\Program Files\Citrix\Provisioning Services\BNAbsService.exe

- C:\ProgramData\Citrix\Provisioning Services\Tftpboot\ARDBP32.BIN

Citrix recommend excluding these files from being scanned by your anti-virus software. However, you should consult your security team before setting such exclusions. As Citrix also recommend, you should perform scheduled scans on all files and folders, including the excluded ones.

PVS vDisk/Target Device 7.x Anti-Virus exclusions

Consider excluding the below files from anti-virus scans and/or on-access scanning.

- Pagefile and Print Spooler directory

- vDisk Write Cache file (vdiskdif.vhdx or .vdiskcache)

- C:\Program Files\Citrix\Provisioning Services\BNDevice.exe

- C:\Program Files\Citrix\Provisioning Services\drivers\BNIStack6.sys

- C:\Program Files\Citrix\Provisioning Services\drivers\CNicTeam.sys

- C:\Program Files\Citrix\Provisioning Services\drivers\CFsDep2.sys

- C:\Program Files\Citrix\Provisioning Services\drivers\CVhdBusP6.sys

- C:\Program Files\Citrix\Provisioning Services\drivers\CVhdMp.sys

Citrix recommend excluding these files from being scanned by your anti-virus software. However, you should consult your security team before setting such exclusions. As Citrix also recommend, you should perform scheduled scans on all files and folders, including the excluded ones.

Skype for Business

- Using Skype for Business on VDI or RDSH hosts should be complemented with the Skype for Business Optimization Pack to reduce overhead on virtual machines and improve the communication experience. See https://jgspiers.com/skype-for-business-xenapp-xendesktop/

- When using Skype for Business, use the Audio setting Medium – Optimized for Speech.

- Tag Skype for Business IPv4 or IPv6 packets coming from your endpoints running the Skype for Business RealT

SQL

- Make sure the Read-Committed Snapshot option is enabled on the SQL database serving XenDesktop https://support.citrix.com/article/CTX137161

- Through Citrix testing, a SQL server providing a XenDesktop 7.11 database with 2vCPU could accommodate 16,000 session launches in 15 minutes. The same with 4vCPU could accommodate 32,000 session launches in 15 minutes. This roughly worked out at 9 session launches per second per vCPU.

- For database sizing, use the XenDesktop 7.x Database Sizing Tool

- Through Citrix testing, a XenApp 7.11 database hosting 40,000 users used under 150MB disk space. The same for XenDesktop used under 400MB. The transaction logs for 1,000 users logging on and off each day for 5 days resulted in 300MB of data consumption.

- In XenApp/XenDesktop 7.11, Citrix revisited the core brokering SQL code and improved brokering under latency. For example, during Citrix testing the time to launch 10,000 user sessions on XenDesktop 7.7 with 90ms latency was 44min 55sec, versus a much reduced 13min 10sec on 7.11+. See XenApp and XenDesktop 7.11 through current: Latency and SQL Blocking Query Improvements

- Move secondary databases (Logging & Monitoring) to a separate SQL server. See https://jgspiers.com/moving-logging-monitoring-database/ for a guide to do that. XenApp/XenDesktop 7.7+ provides the ability to move and split these databases when creating your Citrix site.
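To turn the transaction log figure from the Citrix testing above (300MB for 1,000 users logging on and off daily over 5 days) into a rough estimate for your own site, you can scale it linearly by user-days. That linear scaling is an assumption, and the sketch ignores the database recovery model and log backups, which in practice decide how large the log file grows.

```python
# Citrix test figure quoted above: 1,000 users logging on/off daily for
# 5 days produced about 300MB of transaction log data.
REFERENCE_MB = 300
REFERENCE_USER_DAYS = 1_000 * 5


def estimate_log_mb(users: int, days: int) -> float:
    """Linearly scale the reference figure to another user count and period.

    Assumption: log growth is proportional to user-days and the log is not
    truncated by backups during the period.
    """
    return REFERENCE_MB * (users * days) / REFERENCE_USER_DAYS


print(estimate_log_mb(5_000, 30))  # 9000.0
```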

StoreFront/Receiver for Web

- Install StoreFront on its own dedicated machine and see the tweaks at https://jgspiers.com/storefront-default-iis-page-optimizations/. Note that many of these tweaks can be performed via the StoreFront management console in the latest StoreFront releases.

- From Citrix testing, a StoreFront server configured with 8GB RAM and 4vCPU was able to handle up to 200,000 connections at a rate of roughly 15 logons per second.

- When adding a Delivery Controller to StoreFront, do not include periods in the display name.

- When Load Balancing StoreFront, use Source IP persistency. When Load Balancing Web Interface, use Cookie Insert.

Updating PVS Target Device Software/VM Tools

- Reverse image the vDisk if you are updating PVS Target Device software below v7.6.1. The process with Hyper-V is documented at https://jgspiers.com/pvs-target-device-software-update-hyperv-reverse-image/.

- Uninstall Target Device Software before VM tools upgrade/install. Install Target Device Software after VM tools upgrade/install.

- For PVS vDisk reverse imaging using VMware vCenter Converter, see https://jgspiers.com/pvs-reverse-image-with-vmware-vcenter-converter/. From 7.6.1 onwards, you don’t need to reverse image.

User Environment Management

- Use Citrix Workspace Environment Management to decrease log on/log off times, manage I/O and processes more effectively and boost the overall user experience – https://jgspiers.com/citrix-workspace-environment-manager/

- Exclude lync.exe and AppVClient.exe from CPU Spikes Protection.

User Profiles

- Use features of Citrix Profile Management to mitigate issues such as large roaming profiles. CPM does everything Roaming Profiles do, with additional neat features. See https://jgspiers.com/citrix-profile-management-overview/ for an insight into CPM, including tips.

- When using Citrix Profile Management, add the UserProfileManager.exe process, located in C:\Program Files\Citrix\User Profile Manager\, to your Anti-Virus trusted process list. See https://docs.citrix.com/en-us/profile-management/5/upm-plan-permissions.html for more information.

- Make sure your profile store, whether used by Citrix Profile Management or Roaming Profiles, is using SMB3+. This is available from Windows Server 2012 onwards.

- Keep user profiles close to the machines users will be connecting to. This may involve routing users to their home datacentres to ensure they don’t download a profile from one end of the world to a desktop on the other end.

- If applications don’t require a profile, don’t use Roaming Profiles or Citrix Profile Management. It would be better to use a Mandatory Profile for quicker logons.

- Redirection also helps improve logon times since there will be no caching, downloading or streaming of profile files and folders.

- Be aware that Microsoft OS feature releases such as Anniversary Update and Creators Update can result in new profile versions being introduced.

- Citrix still recommend separate profiles for each profile version. They also do not recommend mixing Desktop OS profiles with Server OS profiles.

- Do not use DFS-R to replicate Citrix Profile stores. This is not supported by Microsoft. Instead, you could use DFS-N and scale your profile store across several file servers. The individual file servers can reside on clustered hosts. Instead of hosting all your profiles on one or two servers, scale them out across multiple servers. This reduces the number of users affected if one file server fails.

- Redirection improves logon times and reduces profile size. Citrix do not recommend redirecting the Start Menu.

- The Profile Streaming feature of Citrix Profile Management can help when users log on to machines and profiles are not local. Profile Streaming is not supported when App-V is in use.

VDI or RDSH

- Use VDI for users who require more resources, or users who need a persisted desktop experience. If you have general office workers who use the same set of applications every day, such as word processing and email, consider using RDSH. Using RDSH generally means more users for less compute.

Workspace Control

- Allow applications to follow users between devices. Optimise and standardise machines to support Workspace Control. See https://jgspiers.com/optimising-the-citrix-receiver-experience/ for more information on Workspace Control.

XenApp and XenDesktop

- Adaptive Transport was fully released in XenApp/XenDesktop 7.13. This new HDX transport protocol runs on top of UDP and performs better as latency scales than traditional ICA over TCP. From one of the tests I performed, a 45MB file copy over UDP with 200ms latency completed 36 seconds faster than a TCP copy with 100ms latency. For more information and testing results see https://jgspiers.com/hdx-enlightened-data-transport/

- Disable HDX channels such as Client Drive Mapping, Printer Redirection, Audio redirection and so on if they are not being used. This will reduce bandwidth and footprint instead of keeping unused virtual channels active.

- When sizing Delivery Controllers, use N+1 redundancy. A Delivery Controller can support up to 5,000 VDAs, so if you have 10,000 VDAs, deploy a minimum of 3 Delivery Controllers.

- When adding a Delivery Controller to StoreFront, do not include periods in the display name.

- Citrix support sites that have a maximum of 20,000 users.

- Keep in mind that exported Citrix policies cannot be imported into another separate site. This is because policies contain site specific information.

- You can run older VDA machines within newer Citrix XenApp and XenDesktop sites by lowering the Machine Catalog VDA version level, for example to 7.6 (or newer) in a XenDesktop 7.13 site.

- Make sure reverse DNS is in place, as XenApp, XenDesktop and StoreFront require reverse lookups.
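A quick way to confirm reverse DNS is a forward-confirmed lookup: resolve the IP to a name through its PTR record, then resolve that name back and check that the original IP is among the results. The sketch below does this with Python’s standard `socket` module; it handles IPv4 only as written, and the addresses in the usage comment are placeholders.

```python
import socket


def reverse_dns_ok(ip: str) -> tuple[bool, str]:
    """Forward-confirmed reverse DNS check for an IPv4 address.

    Returns (ok, detail): the PTR name on success, otherwise the reason.
    """
    try:
        hostname, _aliases, _addrs = socket.gethostbyaddr(ip)  # PTR lookup
    except OSError as exc:  # socket.herror / socket.gaierror
        return False, f"no reverse record for {ip}: {exc}"
    try:
        _name, _aliases, forward_ips = socket.gethostbyname_ex(hostname)  # IPv4 A lookup
    except OSError as exc:
        return False, f"{hostname} does not resolve forward: {exc}"
    if ip not in forward_ips:
        return False, f"{hostname} resolves to {forward_ips}, not {ip}"
    return True, hostname


# Usage (placeholder addresses for a Delivery Controller and a StoreFront server):
# for ip in ["10.0.0.21", "10.0.0.31"]:
#     print(ip, reverse_dns_ok(ip))
```

Checking the forward direction as well catches stale PTR records, not just missing ones.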

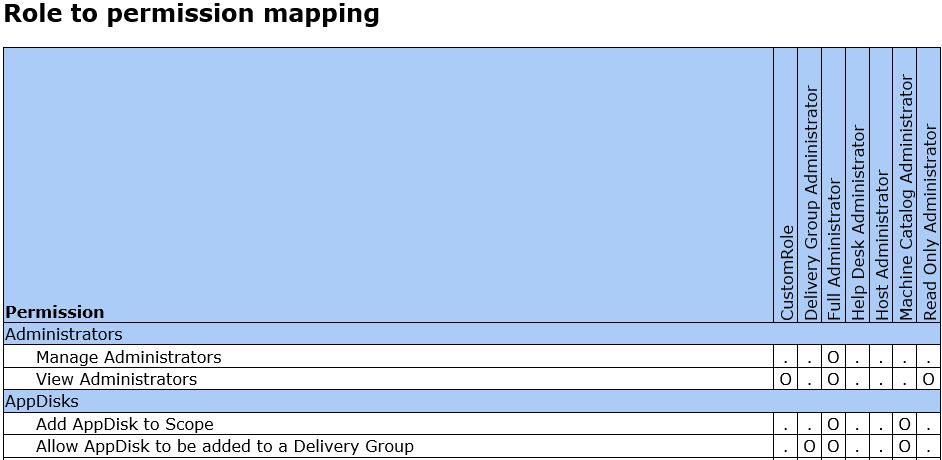

- Running the OutputPermissionMapping.ps1 script allows you to view in HTML or CSV format each default Role available in Citrix Studio including any custom created roles. The script can be found in C:\Program Files\Citrix\DelegatedAdmin\SnapIn\Citrix.DelegatedAdmin.V1\Scripts\.

- Through Citrix testing, a XenDesktop 7.11 Delivery Controller with 2vCPU could launch 5,000 sessions in 15 minutes. The same with 4vCPU could launch 10,000 sessions in 15 minutes. Testing was based on 2 Intel Xeon L5320 1.86GHz quad core processors. This roughly worked out at 5.5 session launches per second with 2vCPU, or about 2.8 per second per vCPU.

- Through Citrix testing, a XenApp 7.11 Delivery Controller with 2vCPU could launch 3,000 sessions in 15 minutes. The same with 4vCPU could launch 6,000 sessions in 15 minutes. Testing was based on 2 Intel Xeon L5320 1.86GHz quad core processors.
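The Delivery Controller sizing figures in this section can be checked with a little arithmetic: N+1 controller counts from the 5,000 VDAs per controller figure, and launch rates from the 15-minute tests. The sketch assumes capacity scales linearly, which real deployments will not always do. For the XenDesktop tests it gives roughly 5.6 launches per second on 2 vCPU, or about 2.8 per second per vCPU, and for XenApp about 1.7 per second per vCPU.

```python
import math

WINDOW_SECONDS = 15 * 60  # the Citrix launch tests quoted above ran for 15 minutes


def controllers_needed(vdas: int, per_controller: int = 5_000, spare: int = 1) -> int:
    """Delivery Controllers needed with N+1 redundancy.

    N is the minimum number of controllers that can carry every VDA;
    `spare` extra controllers (1 for N+1) cover a failure or maintenance.
    """
    return math.ceil(vdas / per_controller) + spare


def launch_rates(vcpus: int, sessions: int) -> tuple[float, float]:
    """Return (launches per second, launches per second per vCPU)."""
    per_second = sessions / WINDOW_SECONDS
    return per_second, per_second / vcpus


print(controllers_needed(10_000))  # 3

# (product, vCPUs, sessions launched in 15 minutes) from the tests above
for product, vcpus, sessions in [
    ("XenDesktop 7.11", 2, 5_000),
    ("XenDesktop 7.11", 4, 10_000),
    ("XenApp 7.11", 2, 3_000),
    ("XenApp 7.11", 4, 6_000),
]:
    total, per_vcpu = launch_rates(vcpus, sessions)
    print(f"{product}, {vcpus} vCPU: {total:.2f}/s, {per_vcpu:.2f}/s per vCPU")
```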

Steve Elgan

August 2, 2017

I am yet again impressed by your content George. I’ll be spending the next several days comparing settings in my deployments.

George Spiers

August 2, 2017

Thanks Steve, hopefully you pick up a couple of useful tips here to help you out 🙂

Trentent Tye

August 3, 2017

Awesome job George!

“Citrix support sites that have a maximum of 20,000 users.”

I believe this maximum is now 40,000 users at 10,000 per zone with 7.14

George Spiers

August 3, 2017

Thanks. Yeah, in the documentation it says Local Host Cache can support an outage to a maximum of 40,000 VDAs in a single site, 10k per zone as you mention. However, nothing about users!

Nick Panaccio

February 28, 2018

Great stuff, as usual, George. One thing I’m curious about, though. You recommend no more than 1.5x overcommitment of vCPUs as the max, which in my case would be 96 vCPUs spread out through all VMs (32 physical cores). However, Citrix’s ‘Rule of 5 and 10’ blog basically says multiply the physical cores by 5 for XenDesktop, giving me 160 total VMs, if I’m using 2 vCPUs per VM. Unless I’m missing something here, wouldn’t that Rule of 5 and 10 assumption be thrown out the window on a completely full XenServer host with all 96 vCPUs being utilized?

George Spiers

March 1, 2018

Hi Nick

I changed the wording on that as it was confusing and incorrect. 1.5x overcommit on a 32 physical core box would be 48vCPU.

The best way to get your answer is by running LoginVSI tests for all the different overcommit ratios. With older 3+ year old hardware 1.5-2x seemed to offer the safest results, but newer hardware has evolved and these ratios might still work well but maybe you could get better. 160vCPUs would be a 5x overcommit. I haven’t tried the rule of 5 to 10 myself but when I get my hands on some new hardware I’ll check it out.

Nick Panaccio

March 1, 2018

Gotcha, thank you! And my mistake – I was using the total threads and not cores when doing the math the first time around.

David Huber

May 17, 2018

HTTP/2 causes problems with Citrix Receiver for iOS. I had to disable it because we were getting the error message “too many HTTP redirects”.

PC Web

August 16, 2018

Thanks for sharing!

Jason

October 2, 2018

Hey George,

You state to reinstall the VDA after upgrading the VMware tools. Are you saying completely uninstall the VDA and then reinstall it?

George Spiers

October 8, 2018

Yes exactly. Also note that it is a recommendation; you may experience zero issues not doing it.

Anonymous

October 26, 2018

tweaks https://jgspiers.com/tweaking-citrix-directory-dash-of-monitoring/

must be tweaks https://jgspiers.com/tweaking-citrix-director-dash-of-monitoring/

George Spiers

October 26, 2018

Thanks, that was a typo.

Pingback: Tons of Citrix tips & tricks, best practices, etc. – Tim's Virtual Blog

Anonymous

March 27, 2019

What are the benefits of keeping the hypervisors for RDSH or VDI VMs separate from the hypervisors that will host SQL, Domain Controllers and DHCP servers?

George Spiers

April 2, 2019

It makes for easier capacity planning/management. Normally in an enterprise environment you will have Hypervisors even dedicated to SQL. I get not every organisation has this benefit though!

DougR

April 2, 2019

Thanks George

Pingback: Citrix Troubleshooting 101: Frequently Asked Questions | eG Innovations

Sarah

March 4, 2021

Hi, George,

Here’s an interesting challenge… Citrix 7.12 on a new VM server Windows 2016 and Chrome or Edge App to pull up the web access to Oracle JDE E1 9.2….. need to have printing configured. Looking at Universal Print setup now. I need Citrix 7.12 to Print to Default printers for locations that cannot use VPN directly, but can connect Citrix Web access Full Version with Citrix Workspace 1802 or higher. Tight budget for a handful of users who are stuck with what they have for now in a plant that needs to keep running. I appreciate any tips, tricks, or ideas. Kind regards, ~Sarah_oldie&newbie_